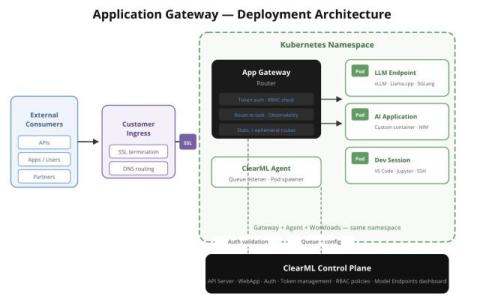

How ClearML Fits Into a Zero-Trust Kubernetes Architecture

Zero trust is an architectural principle, not a product. It means assuming breach, verifying every connection explicitly, and granting the minimum access required for each interaction. This post covers how those principles apply to Kubernetes AI infrastructure and, specifically, how ClearML’s security model slots into each layer: network segmentation, workload identity, access controls, and audit logging.